A complete guide to decentralized storage, explaining how it works, top protocols, market potential, risks, and why it matters.

Author: Chirag Sharma

Every file you upload to the cloud feels personal. Your photos, your contracts, your backups. Yet technically and legally, that data lives on infrastructure owned by someone else. When you store a document in Google Drive, Dropbox, iCloud, or Amazon S3, you are not storing it on your own hardware. You are renting access to a server operated by a centralized entity. That entity controls uptime, pricing, access permissions, and data retention policies. Access is not ownership.

This structural reality creates a tension that most users rarely confront. Centralized cloud infrastructure is convenient and efficient. However, it is also concentrated. Today, a handful of hyperscalers control the majority of global cloud infrastructure. When such a large percentage of the world’s data flows through a few corporate networks, questions of resilience and sovereignty naturally emerge. This makes a strong case for decentralized storage.

Centralization introduces single points of failure.

Over the past decade, high-profile surveillance revelations, data breaches, and sudden platform bans have made this reality visible. Users increasingly understand that centralized infrastructure optimizes for efficiency and profitability, not necessarily for user sovereignty.

This is the environment in which Decentralized Storage emerged.

Decentralized Storage is not simply a technical upgrade. It is a structural response to concentrated control over digital infrastructure.

The philosophical roots trace back to early peer-to-peer networks. Systems like BitTorrent demonstrated that files distributed across thousands of nodes could remain accessible even if individual nodes went offline. The architecture worked. What it lacked was a sustainable economic layer.

Blockchain technology introduced that missing incentive model.

Decentralized Storage distributes data across a network of independent nodes instead of housing it in centralized data centers owned by a single entity.

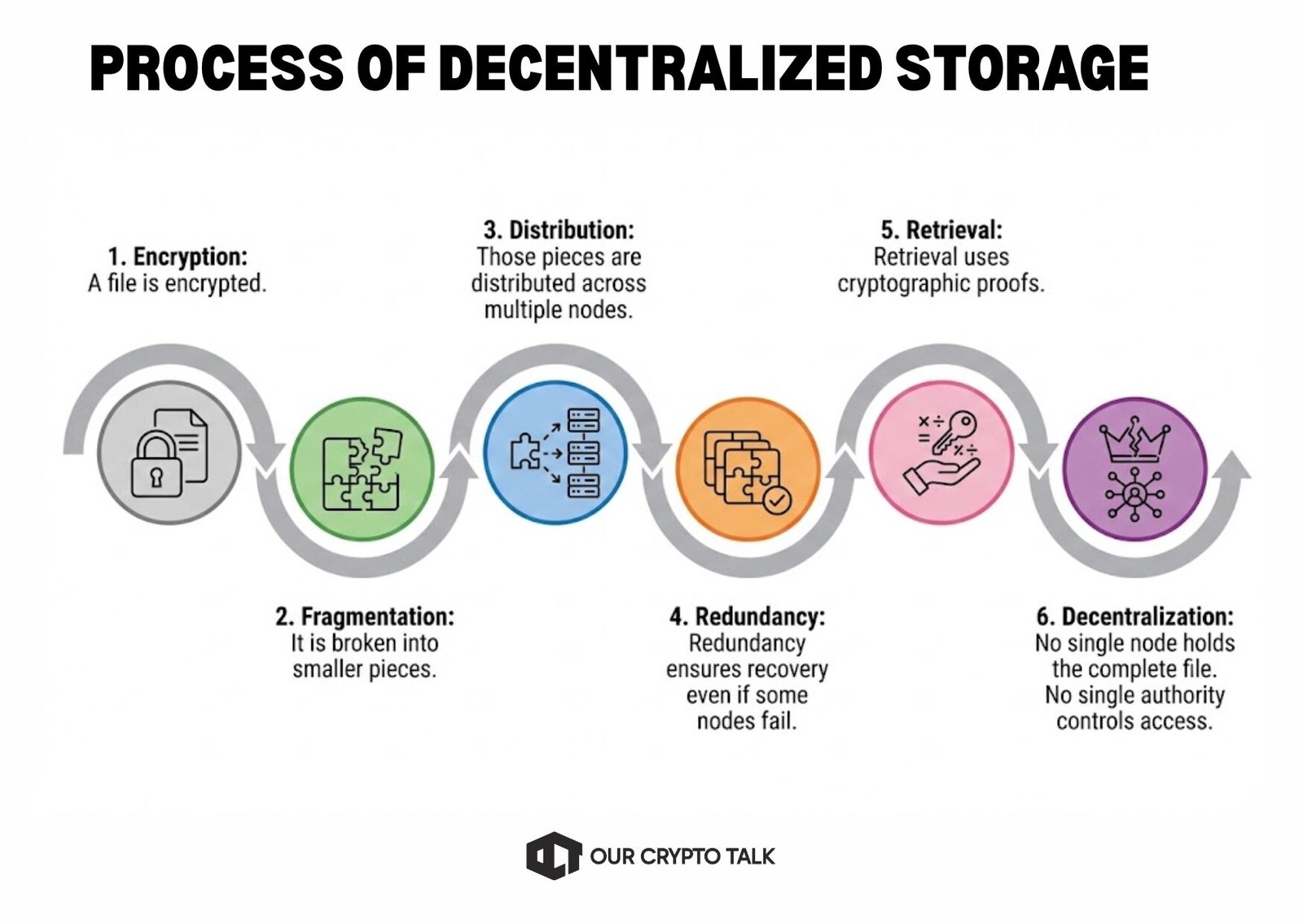

The process typically works as follows:

No single node holds the complete file. No single authority controls access.

This architecture introduces several structural properties that centralized systems cannot replicate by definition.

Because data exists across many nodes globally, removing access requires disabling large portions of the network. There is no central server to seize or shut down.

Files are replicated across multiple nodes. If one node goes offline, others still hold recoverable fragments. This reduces the risk of catastrophic data loss.
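The fragment-and-replicate idea can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the function names, the round-robin placement rule, and the use of plain replication (production networks often use erasure coding instead, and encrypt fragments first, which this sketch omits).

```python
from typing import AbstractSet, Dict, List


def split_into_fragments(data: bytes, fragment_size: int) -> List[bytes]:
    """Cut the payload into fixed-size fragments (the last one may be shorter)."""
    return [data[i:i + fragment_size] for i in range(0, len(data), fragment_size)]


def place_fragments(
    fragments: List[bytes], node_count: int, replicas: int
) -> Dict[int, Dict[int, bytes]]:
    """Copy each fragment onto `replicas` consecutive nodes, round-robin.

    Returns {node_id: {fragment_index: fragment_bytes}}.
    """
    nodes: Dict[int, Dict[int, bytes]] = {n: {} for n in range(node_count)}
    for index, fragment in enumerate(fragments):
        for r in range(replicas):
            nodes[(index + r) % node_count][index] = fragment
    return nodes


def recover(
    nodes: Dict[int, Dict[int, bytes]],
    fragment_count: int,
    offline: AbstractSet[int] = frozenset(),
) -> bytes:
    """Reassemble the file from whichever online nodes still hold each fragment."""
    parts = []
    for index in range(fragment_count):
        holders = [n for n, held in nodes.items() if n not in offline and index in held]
        if not holders:
            raise LookupError(f"fragment {index} is unavailable")
        parts.append(nodes[holders[0]][index])
    return b"".join(parts)


# Hypothetical usage: 4 fragments spread over 5 nodes, 2 copies each
payload = b"decentralized storage keeps data available"
fragments = split_into_fragments(payload, 12)
nodes = place_fragments(fragments, node_count=5, replicas=2)
restored = recover(nodes, len(fragments), offline={2})  # node 2 is down
```

With these parameters no single node ends up holding every fragment, and the file survives the loss of any one node. Losing two adjacent nodes makes a fragment unrecoverable, which is why real networks tune redundancy carefully.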

Most decentralized storage protocols encrypt files before distribution. Storage providers host encrypted fragments without knowing the underlying content.

Instead of relying on fixed pricing from a centralized provider, decentralized storage creates an open marketplace. Node operators compete to provide storage, driving price discovery through supply and demand.

The breakthrough moment came when blockchain-based incentive layers were added. Tokens created an economic loop:

This transformed peer-to-peer storage from a voluntary experiment into a functioning global market.

Decentralized Storage therefore combines:

The result is not simply cheaper storage. It is storage that aligns with Web3’s broader principles: permissionless access, censorship resistance, and user sovereignty.

The rise of Web3, NFTs, decentralized finance, and on-chain governance has intensified the need for infrastructure whose trust assumptions match those of the applications built on top of it.

It makes little sense to build decentralized financial systems while storing their front-end interfaces on centralized servers vulnerable to takedown. Similarly, NFT metadata stored on centralized hosting services undermines the permanence of the asset itself.

The NFT boom exposed this contradiction clearly. When high-value NFTs pointed to images stored on centralized servers, the risk became obvious: if the server disappeared, the NFT lost its functional content.

Decentralized Storage became the backbone for solving that problem.

More recently, artificial intelligence has introduced another demand vector. Training datasets, model weights, and inference outputs require massive, verifiable storage systems. The debate over who controls AI data has elevated decentralized storage from niche infrastructure to strategic necessity.

In this context, Decentralized Storage is no longer just an ideological alternative. It is emerging as core infrastructure for digital permanence and sovereign data rights.

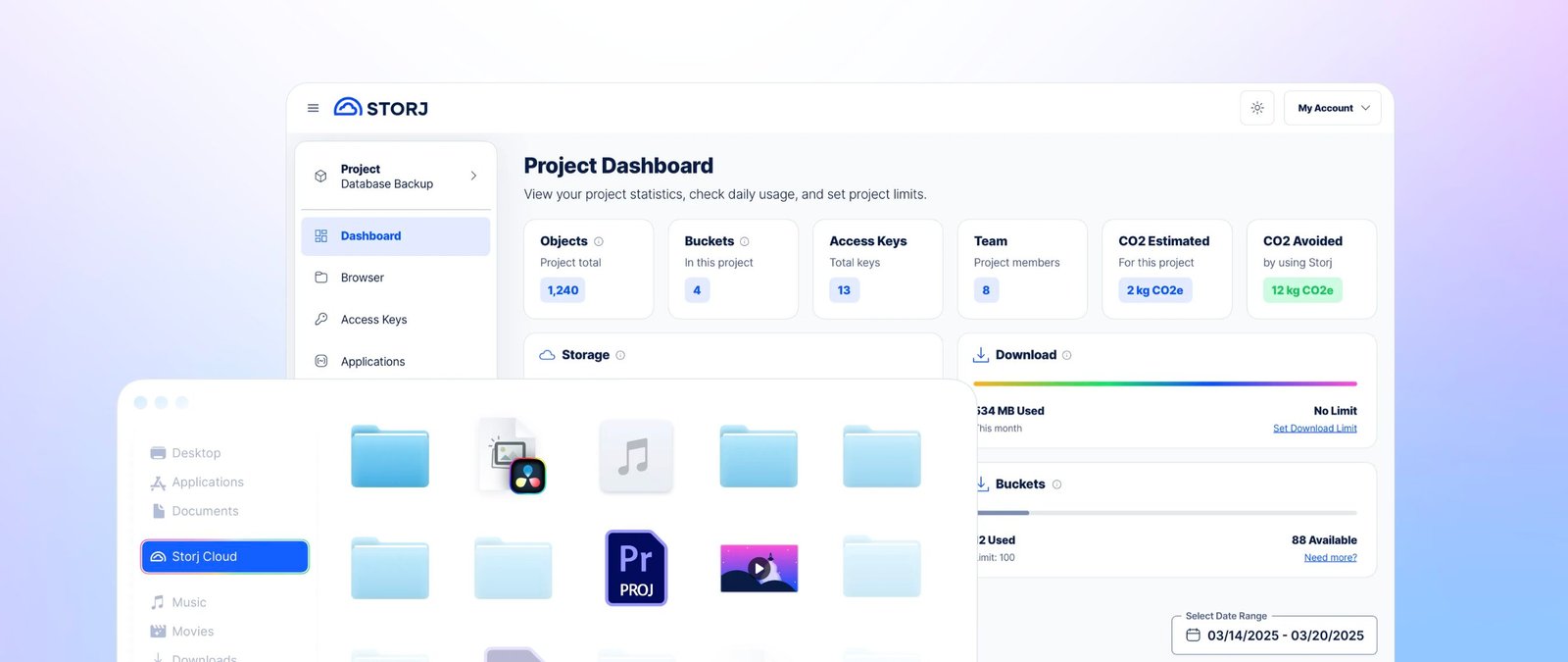

One of the earliest serious implementations of Decentralized Storage came from Storj, launched in 2014.

Storj took a pragmatic approach. Instead of attempting to reinvent the internet, it focused on being a drop-in alternative to Amazon S3. Developers could integrate Storj with existing tools, store files in a decentralized network, and access them through familiar APIs.

The core model was simple:

Storj proved something critical: decentralized storage could be enterprise-grade. It could offer competitive speeds, reliability guarantees, and predictable pricing. More importantly, it showed that token-incentivized storage markets could function commercially rather than purely ideologically.

This shifted decentralized storage from philosophical experiment to viable infrastructure.

While Storj focused on replacing cloud storage, IPFS aimed to redesign how the internet itself locates data.

Traditional web architecture is location-based. A URL points to a specific server. If that server goes down, the content becomes inaccessible.

IPFS introduced content-addressing.

Instead of locating data by where it lives, IPFS locates it by what it is. Each file receives a cryptographic hash derived from its content. If the content changes, the hash changes. This creates a tamper-evident, distributed addressing system.
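A minimal sketch of content-addressing, assuming a plain hex-encoded SHA-256 digest as the address. Real IPFS content identifiers are more elaborate (files are chunked and the identifier encodes the hash function used), so the `ContentStore` class below is a hypothetical illustration of the principle, not the IPFS API.

```python
import hashlib
from typing import Dict


class ContentStore:
    """Toy content-addressed store: the key is the SHA-256 digest of the value."""

    def __init__(self) -> None:
        self._blocks: Dict[str, bytes] = {}

    def put(self, content: bytes) -> str:
        """Store the content and return the address derived from it."""
        address = hashlib.sha256(content).hexdigest()
        self._blocks[address] = content
        return address

    def get(self, address: str) -> bytes:
        """Fetch content and re-hash it, so tampering is detected on retrieval."""
        content = self._blocks[address]
        if hashlib.sha256(content).hexdigest() != address:
            raise ValueError("content does not match its address")
        return content


# Hypothetical usage: identical content always maps to the same address
store = ContentStore()
address_v1 = store.put(b"hello, permanent web")
address_v2 = store.put(b"hello, permanent web!")  # one byte added, new address
```

Because the address is derived from the bytes themselves, it does not matter which node serves the content: the requester can verify it independently.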

The implications are profound:

IPFS became foundational to Web3. NFT metadata, decentralized app assets, and governance records often rely on IPFS as their storage layer.

However, IPFS itself did not include an economic incentive model. That came through its companion network.

Filecoin built on IPFS by introducing token-based incentives. It launched its mainnet in 2020 and created a marketplace where storage providers compete to host data in exchange for FIL tokens. The protocol verifies storage through cryptographic proofs, notably Proof-of-Replication and Proof-of-Spacetime.

These proofs ensure that storage providers genuinely store the data they claim to host.
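To give a feel for how a network can check that a provider still holds data without downloading all of it, here is a generic Merkle challenge-response sketch. It is not Filecoin's actual proof system, which is considerably more involved; the function names and the chunking are assumptions made for illustration. The verifier keeps only the Merkle root, challenges a chunk index, and checks the returned chunk against the sibling hashes.

```python
import hashlib
from typing import List, Tuple


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(chunks: List[bytes]) -> List[List[bytes]]:
    """Return every level of the Merkle tree, leaves first, root last."""
    levels = [[h(c) for c in chunks]]
    while len(levels[-1]) > 1:
        level = levels[-1]
        if len(level) % 2:
            level.append(level[-1])  # pad odd levels so every node has a sibling
        levels.append([h(level[i] + level[i + 1]) for i in range(0, len(level), 2)])
    return levels


def prove(chunks: List[bytes], index: int) -> Tuple[bytes, List[bytes]]:
    """Provider side: return the challenged chunk plus its sibling hashes."""
    path = []
    position = index
    for level in build_levels(chunks)[:-1]:
        path.append(level[position ^ 1])  # the sibling sits next to us in the pair
        position //= 2
    return chunks[index], path


def verify(root: bytes, index: int, chunk: bytes, path: List[bytes]) -> bool:
    """Verifier side: recompute the root from the chunk and the sibling path."""
    node = h(chunk)
    position = index
    for sibling in path:
        node = h(node + sibling) if position % 2 == 0 else h(sibling + node)
        position //= 2
    return node == root


# Hypothetical usage: the verifier stores only `root`, then challenges chunk 3
chunks = [b"chunk-0", b"chunk-1", b"chunk-2", b"chunk-3", b"chunk-4"]
root = build_levels(chunks)[-1][0]
chunk, path = prove(chunks, 3)
accepted = verify(root, 3, chunk, path)
```

A provider that discarded the data cannot answer an unpredictable challenge, which is the intuition behind repeated storage audits.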

Filecoin’s scale is notable. It accumulated exabytes of storage capacity, making it one of the largest decentralized storage networks globally.

Beyond raw storage, Filecoin began exploring “compute-over-data,” enabling computations directly on stored datasets. This is particularly relevant for AI use cases, where moving large datasets is inefficient.

Filecoin marked a turning point. Decentralized storage was no longer experimental. It was measurable, scalable infrastructure.

While most decentralized storage protocols focus on renting storage capacity, Arweave took a radically different approach.

Arweave introduced the concept of permanent storage.

Users pay a one-time fee to store data indefinitely. The protocol uses an economic endowment model, where upfront payments are invested to fund long-term storage costs.
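The arithmetic behind a one-time fee can be sketched as a geometric series. The parameters below are hypothetical and are not Arweave's actual figures: the sketch assumes the yearly cost of storing the data falls by a fixed fraction each year, and optionally that the endowment earns a fixed yearly return.

```python
def endowment_required(
    first_year_cost: float,
    annual_cost_decline: float,
    annual_return: float = 0.0,
) -> float:
    """Upfront sum that covers storage costs indefinitely.

    Year t costs first_year_cost * (1 - annual_cost_decline) ** t and is
    discounted by (1 + annual_return) ** t, so the total is a geometric
    series with ratio (1 - decline) / (1 + return).
    """
    if not 0 <= annual_cost_decline < 1:
        raise ValueError("annual_cost_decline must be in [0, 1)")
    if annual_return < 0:
        raise ValueError("annual_return must be non-negative")
    ratio = (1 - annual_cost_decline) / (1 + annual_return)
    if ratio >= 1:
        raise ValueError("costs never shrink relative to the endowment")
    return first_year_cost / (1 - ratio)


# Hypothetical numbers: storage costs 1.00 in year one and falls 20% per year
fee = endowment_required(first_year_cost=1.0, annual_cost_decline=0.2)
```

Under these assumed numbers the series converges to about five times the first-year cost. If costs stopped falling and the endowment earned nothing, no finite fee would be enough, which is why the model depends on its long-term assumptions holding.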

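Its architecture, known as the blockweave, links each new block not only to its predecessor but also to a randomly selected earlier block. Because producing a new block requires access to that earlier data, miners are incentivized to preserve old data over the long term.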

Arweave’s “permaweb” became popular for:

The NFT boom accelerated Arweave adoption. When millions of dollars in digital art relied on off-chain images, permanence became essential.

Arweave demonstrated that decentralized storage is not just about cost efficiency. It is about digital permanence.

Sia created a marketplace model where renters and hosts negotiate storage contracts directly. Smart contracts enforce uptime and payment terms, with the blockchain acting as neutral arbiter.

Sia focused on granular contract-level flexibility. Hosts and renters interact transparently, with penalties for failing to meet performance guarantees.
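The contract mechanics can be sketched as an escrow that is settled when the contract window closes. This is a simplified illustration, not Sia's actual payout logic: the `StorageContract` type, the single pass/fail proof, and the refund-and-burn rule are all assumptions.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class StorageContract:
    renter_payment: int   # locked by the renter when the contract forms
    host_collateral: int  # locked by the host as a performance bond


def settle(contract: StorageContract, proof_submitted: bool) -> Dict[str, int]:
    """Distribute the escrowed funds once the contract window closes."""
    if proof_submitted:
        # The host showed it still holds the data: it earns the fee
        # and gets its collateral back.
        return {
            "host": contract.renter_payment + contract.host_collateral,
            "renter": 0,
            "burned": 0,
        }
    # No valid proof: in this sketch the renter is refunded and the
    # host's collateral is forfeited as a penalty.
    return {
        "host": 0,
        "renter": contract.renter_payment,
        "burned": contract.host_collateral,
    }


# Hypothetical usage, amounts in arbitrary token units
contract = StorageContract(renter_payment=100, host_collateral=50)
payout_ok = settle(contract, proof_submitted=True)
payout_failed = settle(contract, proof_submitted=False)
```

The key design point is that both sides lock funds up front, so the penalty can be enforced automatically instead of depending on the host's goodwill.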

Meanwhile, Chia Network approached storage from a consensus perspective. Chia’s proof-of-space-and-time mechanism incentivized large-scale storage capacity globally. Although not strictly a storage marketplace protocol, it demonstrated how storage itself could become a foundational consensus resource.

Together, these networks expanded the design space of decentralized storage:

The early years of decentralized storage faced skepticism.

Performance lagged behind centralized hyperscalers. User experience was complex. Token volatility created unpredictable pricing for enterprise users.

The breakthrough came during the NFT boom of 2021.

When high-value NFTs referenced centrally hosted assets, the fragility of the model became obvious. Decentralized storage was no longer ideological. It became necessary.

Simultaneously, the broader Web3 ecosystem matured:

Decentralized storage became infrastructure, not optional tooling.

More recently, AI has added another catalyst. Large language models and decentralized compute frameworks require massive datasets stored transparently and securely. Filecoin and others began positioning decentralized storage as the data backbone for decentralized AI.

The narrative shifted from privacy and censorship resistance to strategic infrastructure.

The addressable market for Decentralized Storage is enormous.

Global cloud infrastructure spending surpassed $100 billion annually in recent years, and data generation continues accelerating. Every year, more enterprise applications migrate to the cloud. AI systems require larger datasets. Individuals generate more digital content than the year before.

Even capturing a small percentage of that market would represent massive growth for decentralized storage networks.

However, the opportunity extends beyond price competition with hyperscalers.

Governments and institutions increasingly question where their data resides and who ultimately controls it. When national infrastructure depends on servers owned by foreign corporations, sovereignty becomes more than a philosophical issue.

Decentralized Storage offers a structurally different model:

This is not merely about cost. It is about control.

Healthcare data is fragmented across providers. Patients rarely control lifelong access to their own records. A decentralized storage layer could enable encrypted, patient-controlled medical data accessible across institutions without centralized custodianship.

Such use cases require strong privacy guarantees and regulatory clarity. However, the underlying architecture of decentralized storage aligns well with these needs.

Centralized platforms come and go. Websites disappear. Services shut down. Data vanishes.

Protocols like Arweave demonstrated that permanence can be engineered into infrastructure itself. Digital artifacts, governance records, and cultural documents can be stored in ways that outlive companies.

This introduces a new category of infrastructure: storage not optimized for quarterly earnings, but for generational durability.

The intersection between decentralized storage and artificial intelligence may prove transformative.

AI systems require:

Today, the majority of AI training data is controlled by large corporations. Decentralized Storage introduces an alternative model where datasets are transparently stored, verifiably contributed, and potentially compensated through token-based mechanisms.

Projects exploring compute-over-data aim to run computations directly on stored data without extracting it first. If successful, this would reduce inefficiencies and create entirely new economic models for data markets.

In this scenario, decentralized storage becomes not just a storage solution but a foundation for decentralized AI ecosystems.

Despite its promise, Decentralized Storage faces real structural challenges.

Historically, decentralized retrieval has been slower than centralized CDNs. While improvements in caching layers and routing have narrowed the gap, latency-sensitive applications still favor hyperscalers.

For mainstream adoption, performance must meet or exceed centralized benchmarks.

The complexity of private keys, wallet integrations, and token payments creates friction. Most users do not want to manage cryptographic keys or worry about token volatility.

For decentralized storage to scale, abstraction layers must hide complexity completely. The best solutions will feel indistinguishable from traditional cloud services at the user interface level.

Token-based incentive systems operate within evolving regulatory environments. Classification of storage tokens, compliance obligations, and jurisdictional enforcement remain unresolved in many regions.

Heavy-handed regulation could slow adoption, particularly in enterprise markets.

Censorship resistance is both a strength and a challenge.

Decentralized networks cannot easily remove illegal or harmful content. The same properties that protect dissidents can also protect malicious actors.

Communities and protocol designers must navigate this tension carefully without undermining core decentralization principles.

AWS, Google Cloud, and Azure continue innovating aggressively. They have adopted distributed redundancy, encryption at rest, and advanced security controls.

However, they cannot offer true censorship resistance or eliminate centralized ownership. The differentiation for decentralized storage lies precisely in those structural properties.

Decentralized Storage began as a philosophical response to concentrated control over digital infrastructure. It matured into a technically viable and increasingly enterprise-ready alternative.

Projects such as IPFS, Filecoin, Arweave, and Storj have demonstrated that distributed, encrypted, incentive-driven storage networks can function at scale.

The forces driving adoption are not temporary narratives.

The question facing decentralized storage is not whether it has relevance. It is how quickly it can refine performance, user experience, and regulatory clarity.

If those challenges are solved, Decentralized Storage will not merely compete with centralized cloud providers. It will redefine what ownership means in the digital age.