Top 10 AI Crypto Tokens ranked by market cap in 2026. See which projects lead in agents, compute, inference, and data, with full analysis.

Author: Akshat Thakur

The AI crypto market just crossed $17 billion. That number signals a shift. This sector is no longer running on narrative alone. What changed is simple. Projects are now shipping real infrastructure. Compute networks, data layers, autonomous agents, and on-chain machine learning are moving from concept to usable products. And for the first time, some of these systems are starting to compete with centralized AI in specific niches. The Top 10 AI Crypto Tokens are no longer driven by hype.

At the same time, rankings are no longer stable. Early leaders are getting challenged. Some have been overtaken. The difference now comes down to execution. A few developments explain why this shift happened so quickly.

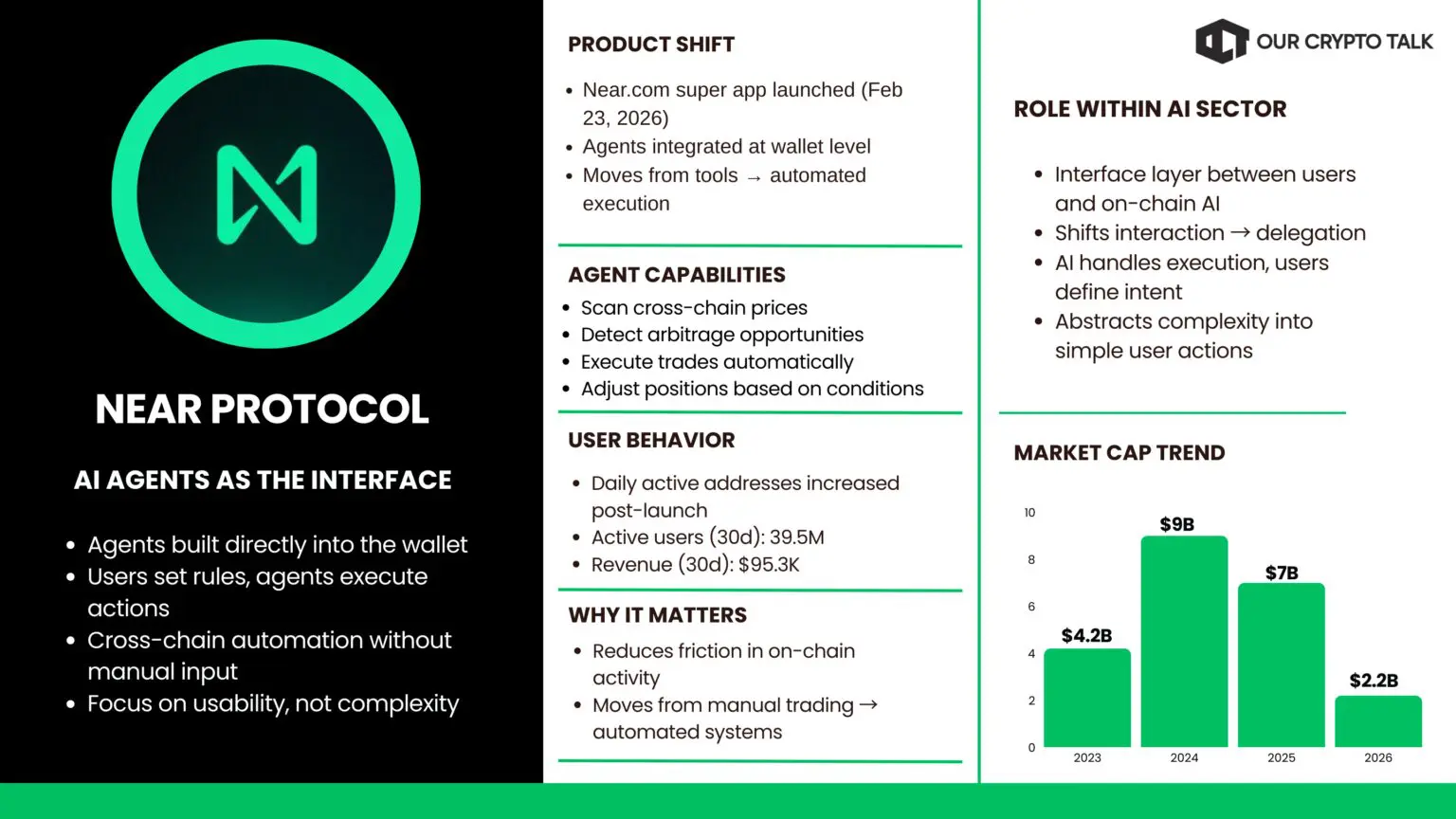

On February 23, 2026, NEAR launched Near.com, a consumer-focused super app that integrates AI directly into the user experience. It brings wallets, cross-chain transactions, private interactions, and AI-driven interfaces into one place. The key change is usability. NEAR is trying to make crypto feel like a normal app.

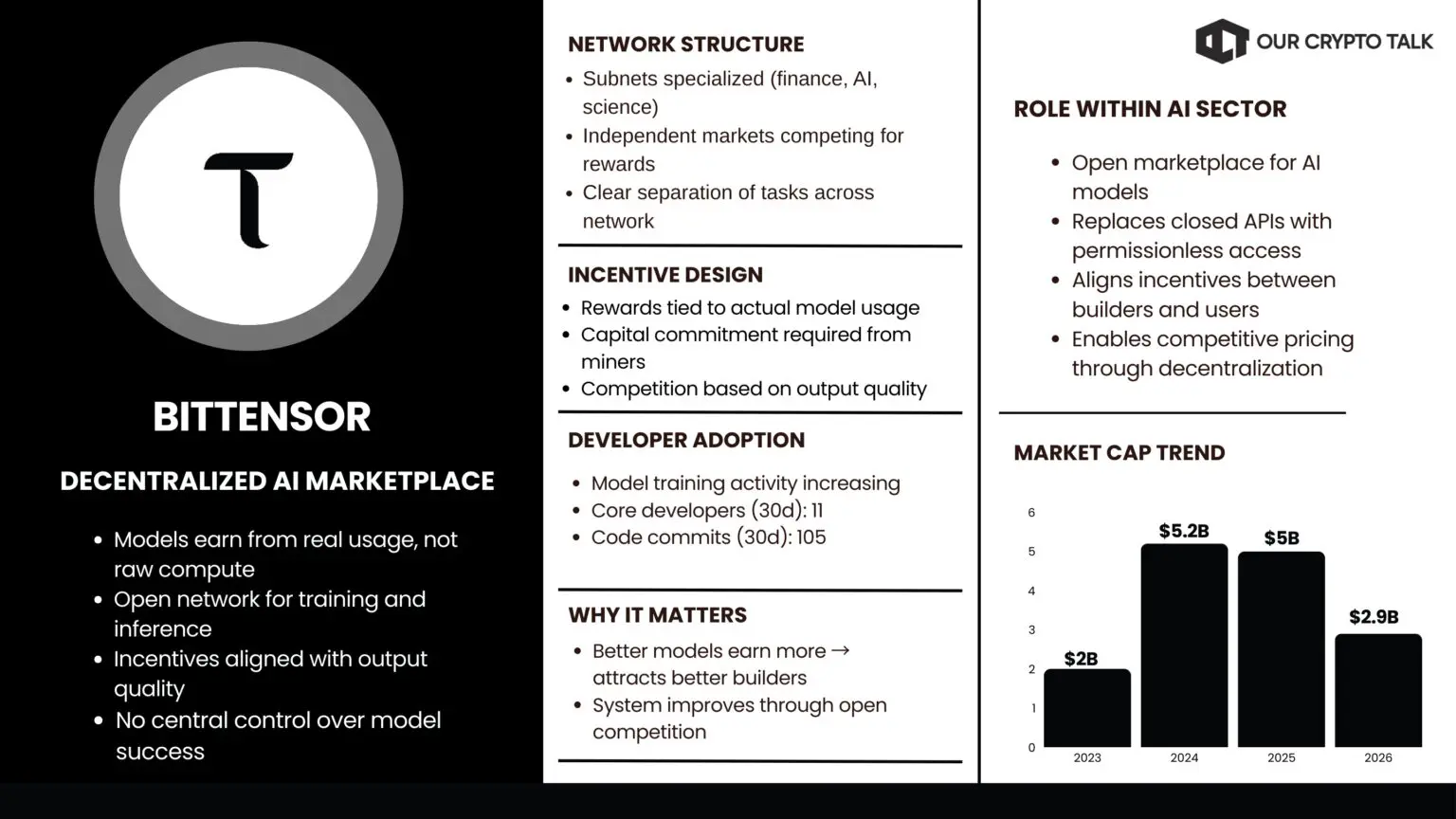

Bittensor is taking a different route. Its v2 upgrade refined how subnets operate and how contributors are rewarded. More importantly, it strengthened a new model where AI systems compete directly on-chain. Models are no longer static outputs. They become participants in an open market.

All of this points to the same conclusion: AI crypto is entering an execution phase. This ranking of the top 10 AI crypto tokens is based strictly on market cap, as reported by CoinGecko and CoinMarketCap in March 2026. No opinions or projections influence the order. Just current valuations, reflecting the state of the AI crypto market today.

The goal is straightforward. Show where capital is concentrated today, and then break down why these projects are holding those positions.

This list is based on one thing. Market cap. All rankings come from CoinGecko data as of March 17, 2026, cross-checked against CoinMarketCap to avoid errors during price swings. No blended scoring. No subjective weighting. Just the total value the market assigned to each project on that date.

Market cap works because it combines price and circulating supply into one number (price multiplied by circulating supply), so it shows where capital is actually sitting across the Top 10 AI Crypto Tokens. In a sector like AI crypto, where narratives move fast, this keeps the ranking grounded in reality.
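To make the ranking rule concrete, here is a minimal Python sketch. It computes market cap as price multiplied by circulating supply, then sorts the ten market caps quoted in this article (rounded, in millions of USD). The helper names and the hypothetical $2.50 token are illustrative only; this is not a CoinGecko or CoinMarketCap API.

```python
def market_cap(price_usd: float, circulating_supply: float) -> float:
    """Market cap = price per token x tokens in circulation."""
    return price_usd * circulating_supply


# Market caps quoted in this article, in millions of USD (rounded).
MARKET_CAPS_M = {
    "TAO": 3000, "NEAR": 1800, "RENDER": 966, "ASI (FET)": 524,
    "VIRTUAL": 519, "KITE": 378, "VVV": 258, "TRAC": 171,
    "SENT": 158, "AKT": 132,
}


def rank_by_market_cap(caps: dict[str, float]) -> list[tuple[int, str, float]]:
    """Return (rank, ticker, cap) tuples, largest market cap first."""
    ordered = sorted(caps.items(), key=lambda item: item[1], reverse=True)
    return [(i + 1, ticker, cap) for i, (ticker, cap) in enumerate(ordered)]


if __name__ == "__main__":
    # Hypothetical token: $2.50 price, 400 million tokens circulating.
    print(market_cap(2.50, 400_000_000))  # 1000000000.0
    for rank, ticker, cap in rank_by_market_cap(MARKET_CAPS_M):
        print(f"{rank:>2}. {ticker:<10} ${cap:,.0f}M")
```

Sorted this way, Render ranks third overall, even though it is discussed later in the compute section. Together, the ten figures add up to roughly $7.9 billion of the $17 billion sector total.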

That said, not every token made the cut. We filtered for projects with real AI functionality. That means active infrastructure, not just branding. Each project needed to show working components like decentralized compute, machine learning models, autonomous agents, or AI-focused data layers. We checked live products, network activity, and development signals to confirm that the tech exists beyond whitepapers.

Anything riding the AI label without substance was excluded. Memecoins, rebranded tokens, and projects with no real integration were removed from consideration.

The result is simple. A clean snapshot of where capital and execution meet across the Top 10 AI Crypto Tokens today. No hype. No projections. Just current leaders based on real data.

Inference is where AI produces output. It is the step where a model processes input and returns an answer. Decentralized inference spreads that work across many nodes instead of relying on a single provider. The network evaluates outputs, rewards the best performers, and improves over time through competition.

Agent systems build on top of that. They take model outputs and act on them. They execute trades, monitor conditions, and manage workflows without constant input. Early 2026 made the split clear. Some projects focused on inference. Others focused on agents. The ones that combined both created usable systems. Several of these tokens, like Bittensor and the ASI Alliance, now rank high among the Top 10 AI Crypto Tokens because they combine usable inference with real automation.

Inference networks reduced cost and improved access. Agent systems turned that into automation. Users no longer interact with tools. They delegate tasks. This split created a clear hierarchy. Projects focused only on raw compute held their ground. Projects that combined inference with usable agent systems moved up fast.

$TAO leads the inference category with a $3B market cap. Bittensor v2 fixed the part that actually matters. Incentives. Before the upgrade, the network looked promising but inconsistent. Subnets lacked structure. Miners could game rewards. Latency made real usage difficult. v2 introduced tighter mechanics. Subnet stake burn forced miners to commit capital. dTAO changes made rewards more precise. Models now earn based on outputs users actually use, not just compute contributed.

The network reorganized fast. Subnets specialized into clear categories like finance, creative generation, and scientific workloads. Latency on common tasks dropped sharply, often by more than half within the first month.

That shift changed behavior. Active miners increased steadily. Model training volume expanded as subnets started handling workloads that used to require dedicated GPU clusters. Developers who once tested Bittensor began routing production traffic through it because the economics finally made sense.

The stake-burn rule removed most of the low-quality participation. Miners now compete on output quality instead of volume. That single change pushed the network toward consistent performance.
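The incentive logic described above (commit capital to participate, earn in proportion to output quality) can be illustrated with a toy Python model. This is not Bittensor's actual consensus or dTAO emission code. The `min_stake` threshold, the quality scores, and the proportional split are simplifying assumptions, used only to show why low-quality volume stops paying.

```python
from dataclasses import dataclass


@dataclass
class Miner:
    name: str
    stake: float    # capital committed to participate (hypothetical units)
    quality: float  # validator-assigned output score in [0, 1] (hypothetical)


def split_emission(miners: list[Miner], emission: float, min_stake: float) -> dict[str, float]:
    """Toy model: miners below min_stake earn nothing; the rest share the
    emission in proportion to their quality scores."""
    eligible = [m for m in miners if m.stake >= min_stake]
    total_quality = sum(m.quality for m in eligible)
    rewards = {m.name: 0.0 for m in miners}
    if total_quality == 0:
        return rewards
    for m in eligible:
        rewards[m.name] = emission * m.quality / total_quality
    return rewards


if __name__ == "__main__":
    miners = [
        Miner("high_quality", stake=50, quality=0.9),
        Miner("average", stake=50, quality=0.3),
        Miner("spammer", stake=1, quality=0.1),  # fails the stake requirement
    ]
    print(split_emission(miners, emission=100.0, min_stake=10))
```

Under these assumptions, the under-staked miner earns nothing no matter how many outputs it submits, and the remaining emission tilts toward the miner whose outputs score higher.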

The system improves through competition. Better models earn more. That attracts better contributors. No central team decides which model wins. This is where Bittensor stopped being a research network. Projects building agents or DeFi tools now use its subnets as their backend instead of running their own infrastructure.

Centralized AI providers are raising prices and restricting access. Bittensor offers the opposite model. Open participation, competitive pricing, and privacy built into the network. Read our full Bittensor review.

$NEAR, at a $1.8B market cap, is positioned around agent-focused infrastructure. NEAR concentrated on the agent layer and on usability. The Near.com super app launched on February 23, 2026, with agents built directly into the wallet. These agents scan cross-chain prices, detect arbitrage, execute trades, and adjust positions without constant input.

The change is simple. Users set the rules once. The agent handles execution. That reduced friction shows up in the data. Daily active addresses jumped within days of launch. Agent-triggered transactions increased several-fold in the first month. Users spent more time in the app because it was actively doing work for them.
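The "set the rules once" pattern can be sketched as a pure function that an agent calls on every price update. Everything here is hypothetical: the `ArbRule` fields, the venue names, and the quotes are invented for illustration and are not part of Near.com or any NEAR SDK. A real agent would also have to account for bridging time, slippage, and fees.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ArbRule:
    min_spread_pct: float  # only act when the price gap exceeds this (hypothetical)
    max_trade_usd: float   # cap on the size of any single trade (hypothetical)


def find_arbitrage(prices: dict[str, float], rule: ArbRule) -> Optional[dict]:
    """Return a buy-low/sell-high plan if the spread between the cheapest
    and most expensive venue meets the user's threshold, else None."""
    if len(prices) < 2:
        return None
    buy_venue = min(prices, key=prices.get)
    sell_venue = max(prices, key=prices.get)
    spread_pct = (prices[sell_venue] - prices[buy_venue]) / prices[buy_venue] * 100
    if spread_pct < rule.min_spread_pct:
        return None
    return {
        "buy_on": buy_venue,
        "sell_on": sell_venue,
        "spread_pct": round(spread_pct, 2),
        "size_usd": rule.max_trade_usd,
    }


if __name__ == "__main__":
    rule = ArbRule(min_spread_pct=0.5, max_trade_usd=1_000)
    quotes = {"chain_a": 100.0, "chain_b": 100.8, "chain_c": 100.2}  # hypothetical
    print(find_arbitrage(quotes, rule))
```

The user's input is reduced to the two numbers in `ArbRule`; the agent can re-run the check on every quote without asking for approval.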

Developers moved quickly as well. GitHub activity increased as builders used the super app as a distribution layer. They can deploy custom agents into an environment that already has users.

This changed how NEAR fits in the market. It is no longer competing as just another Layer 1. It acts as a direct interface between users and on-chain AI. The key shift is practical use. People are not interacting with tools. They are delegating tasks.

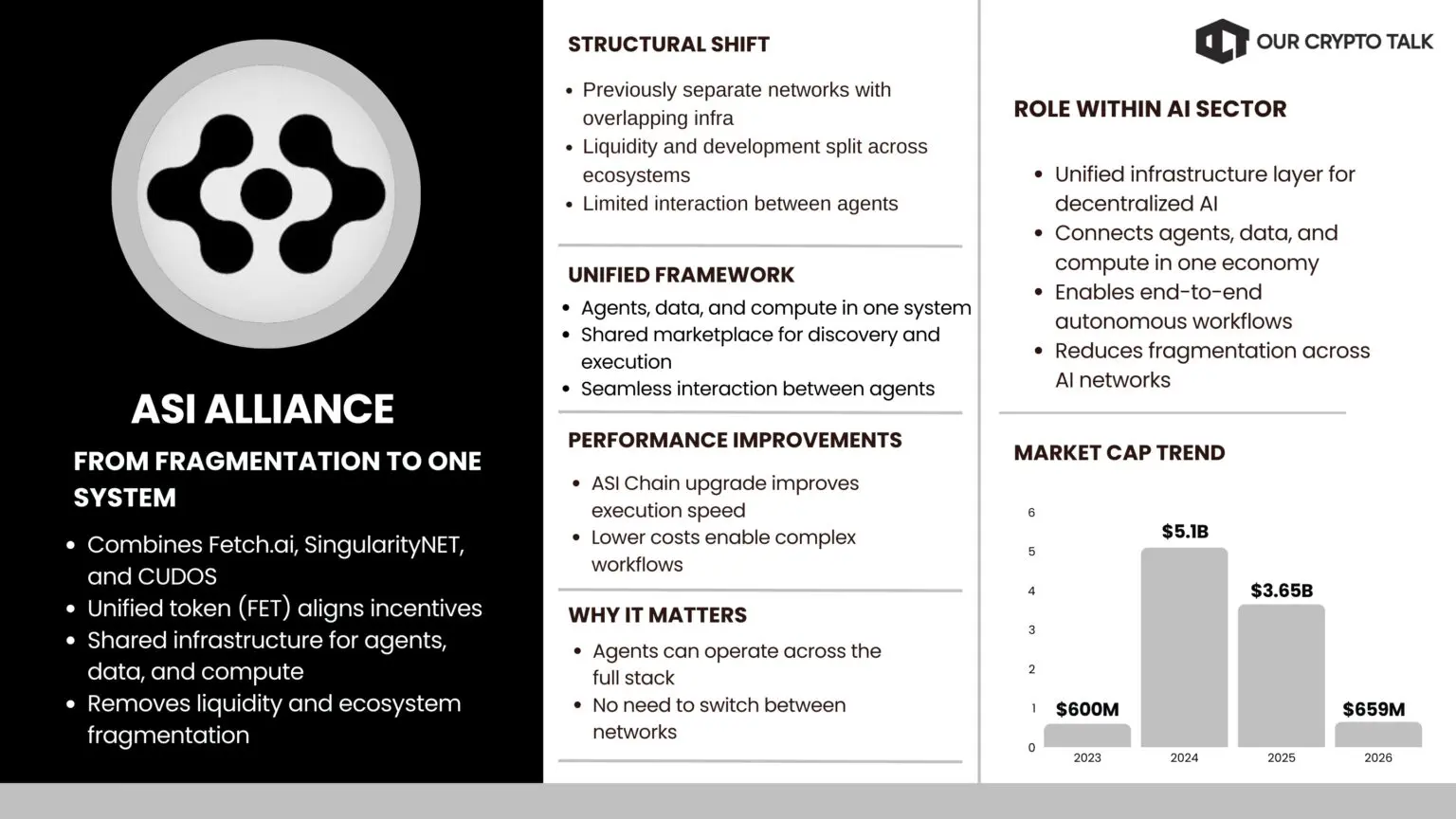

The ASI Alliance sits in the agent category with a $524M market cap. It removed fragmentation. Before the merger, Fetch.ai, SingularityNET, and Ocean Protocol operated separately. Infrastructure overlapped. Liquidity was split. Agents struggled to interact across systems.

The merger of these three projects (with CUDOS joining later) into a unified framework changed that. With FET as the core token, agents, data, and compute now operate inside one system. Agents can discover each other, access resources, and complete tasks within a shared marketplace.

New tools like ASI:Create lowered the barrier to entry. Developers can launch agents with minimal setup. The ASI Chain upgrade improved execution speed and reduced costs, which made more complex workflows viable.

The impact showed up in activity. Agent transaction volume increased steadily. Staking participation grew as incentives aligned under one token. Liquidity improved across the ecosystem. Developers no longer need to split effort across multiple networks. They build once and access the full system.

This turned separate projects into a single environment where agents can operate end to end. An agent can source data, execute actions, and settle transactions within one framework. The shift here is structural. The alliance did not just combine projects. It created a working system where agents can scale without friction. Read our full ASI review.

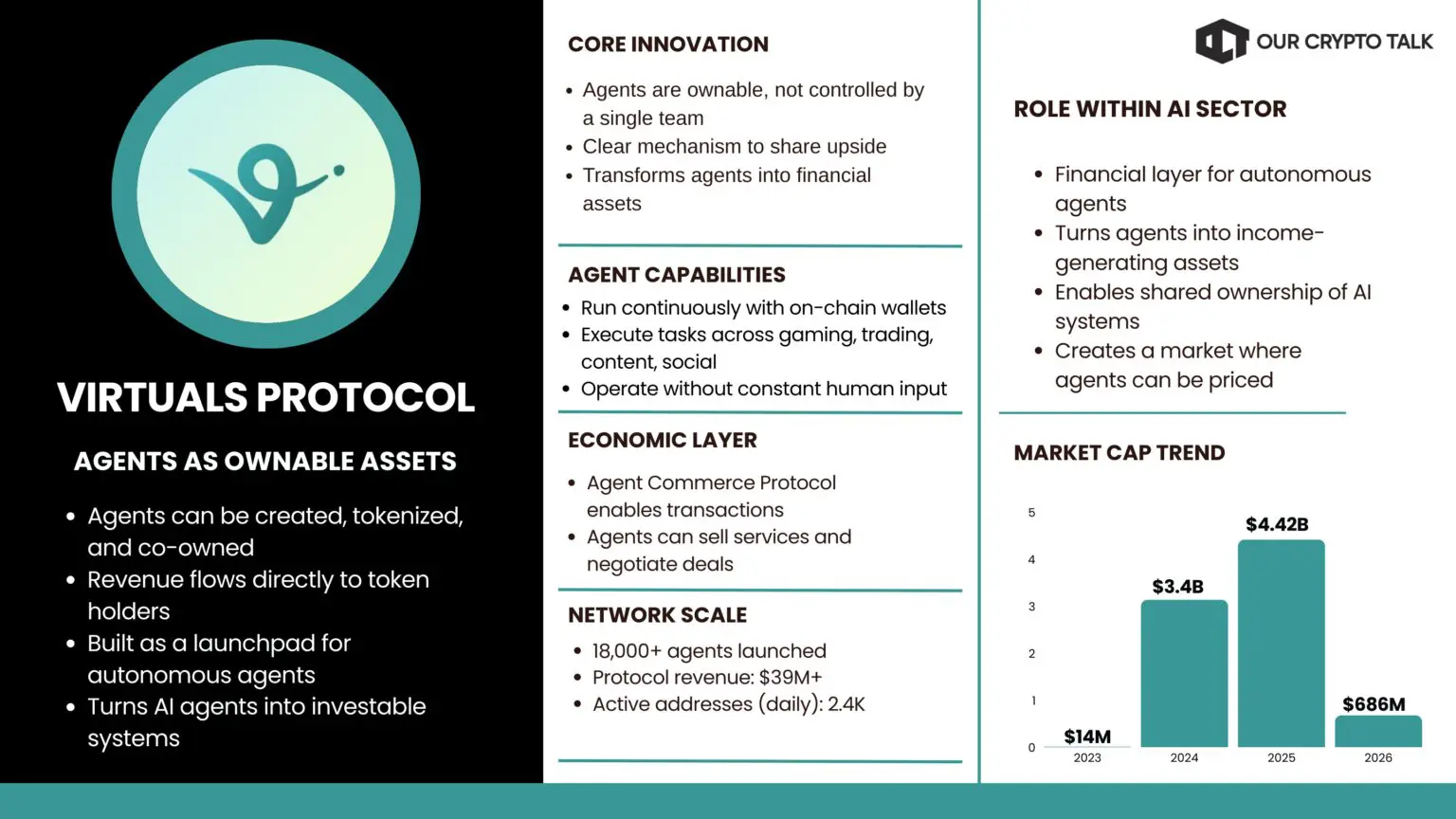

$VIRTUAL operates as an agent platform, currently valued at $519M. Virtuals Protocol did something most AI projects avoided. It made agents ownable and investable.

Before this, agents were either demos or controlled by a single team. There was no clear way to share upside. Virtuals fixed that by building a full launchpad where anyone can create, tokenize, and co-own autonomous agents.

These agents run continuously with on-chain wallets. They execute tasks across gaming, trading, content, and social platforms. Revenue flows directly to token holders.

The real shift came with the Agent Commerce Protocol and the Revenue Network launch in February 2026. Agents can now sell services, negotiate deals, and transact with other agents without human involvement. That turned them from tools into economic actors.

The scale is not theoretical. According to a Virtuals Protocol announcement distributed via PR Newswire, the network has over 18,000 agents, which would make it one of the largest on-chain agent ecosystems (the figure is self-reported). Revenue has also scaled alongside usage. Third-party analytics platforms like DefiLlama and Token Terminal estimate cumulative protocol revenue at roughly $69 million as of March 2026.

Developers no longer need to rely on VC funding. They can raise capital by selling ownership in the agent itself. Users are not just speculating. They are buying into systems that generate income.

Virtuals solved the ownership problem. That is what moved it from narrative to something the market can price.

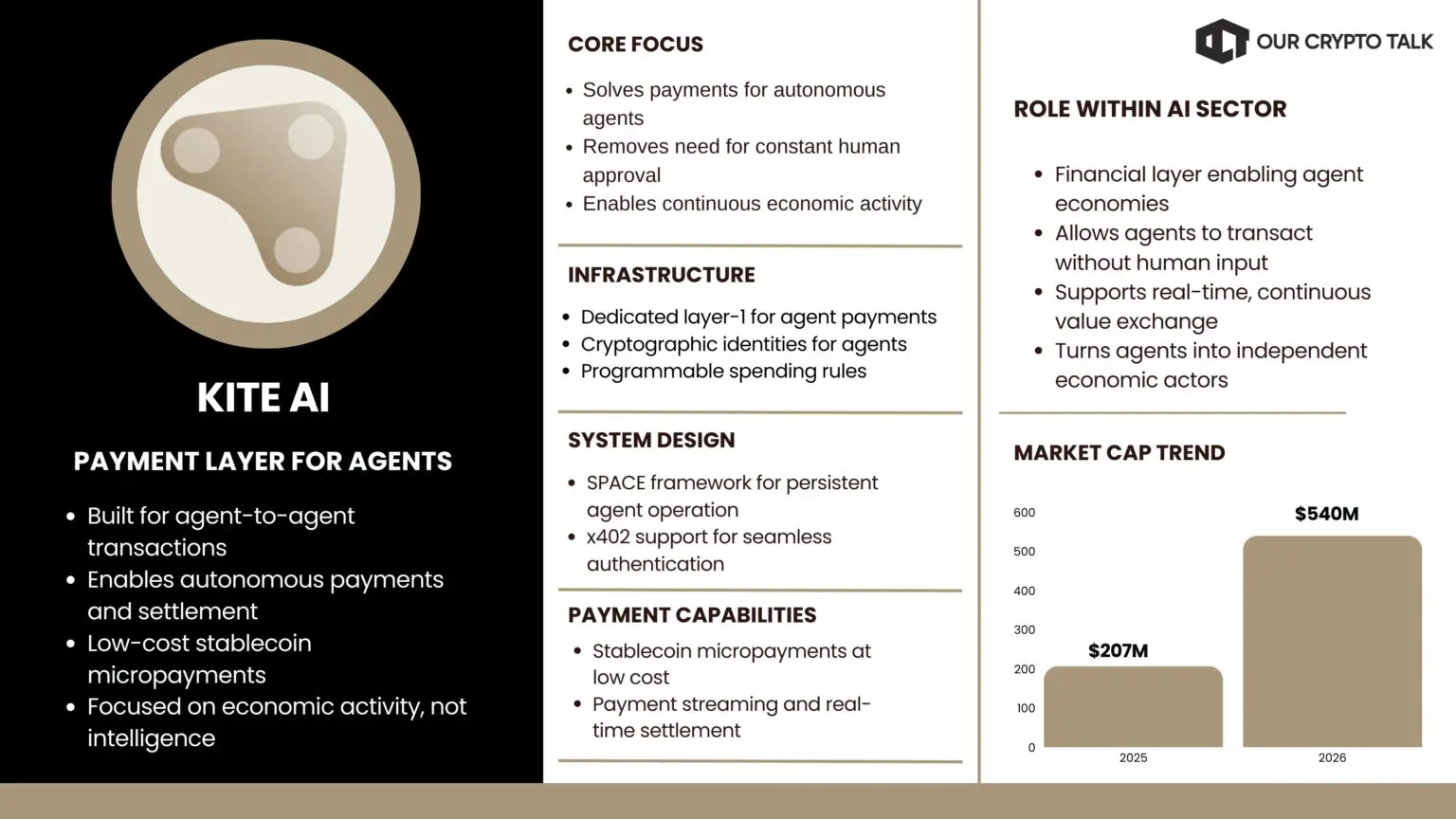

$KITE, a $378M project, focuses on agent infrastructure at the Layer 1 level. Kite AI focused on a part most projects ignore. Payments. Agents cannot function if every action requires approval or expensive transactions. They need a system where they can pay, receive, and settle value on their own.

Kite built a Layer 1 specifically for that. Agents get cryptographic identities, programmable spending rules, and access to stablecoin micropayments at very low cost.
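Programmable spending rules are easiest to picture as a policy the agent's wallet checks before signing anything. The sketch below is hypothetical and does not reflect Kite's actual SDK or on-chain logic. It assumes three rules (a per-payment limit, a daily cap, and an allowlist of payees), all denominated in a stablecoin.

```python
from dataclasses import dataclass


@dataclass
class SpendingPolicy:
    per_payment_limit: float  # max stablecoin amount per payment (hypothetical)
    daily_cap: float          # max total spend per day (hypothetical)
    allowed_payees: set[str]  # counterparties the agent may pay
    spent_today: float = 0.0

    def authorize(self, payee: str, amount: float) -> tuple[bool, str]:
        """Check a payment against every rule; record it only if approved."""
        if amount <= 0:
            return False, "amount must be positive"
        if payee not in self.allowed_payees:
            return False, "payee not allowlisted"
        if amount > self.per_payment_limit:
            return False, "exceeds per-payment limit"
        if self.spent_today + amount > self.daily_cap:
            return False, "exceeds daily cap"
        self.spent_today += amount
        return True, "approved"

    def reset_day(self) -> None:
        """Called once per day to clear the running total."""
        self.spent_today = 0.0


if __name__ == "__main__":
    policy = SpendingPolicy(per_payment_limit=5.0, daily_cap=20.0,
                            allowed_payees={"data_api", "gpu_host"})
    print(policy.authorize("data_api", 2.0))
    print(policy.authorize("unknown_service", 1.0))
```

Because the owner sets the policy once, the agent can pay on its own for everything inside those bounds, and anything outside them is refused without a human in the loop.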

The SPACE framework and x402 support allow agents to authenticate once and then operate continuously. They can open channels, stream payments, and settle transactions with full audit trails.
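Streaming payments over a channel follow a well-known pattern: lock a deposit once, exchange many off-chain balance updates, and settle only the final state. The Python model below is a generic, heavily simplified one-way payment channel, not Kite's implementation or the x402 specification. Real channels rely on signed updates and dispute windows, which are omitted here; the `log` list stands in for an audit trail.

```python
class PaymentChannel:
    """Simplified one-way channel: the payer deposits once, streams
    increments, and settlement splits the deposit by the final balance."""

    def __init__(self, payer: str, payee: str, deposit: float):
        if deposit <= 0:
            raise ValueError("deposit must be positive")
        self.payer, self.payee = payer, payee
        self.deposit = deposit
        self.paid = 0.0
        self.log: list[tuple[int, float]] = []  # (nonce, cumulative amount paid)
        self.open = True

    def stream(self, amount: float) -> int:
        """Record an off-chain increment and return its nonce."""
        if not self.open:
            raise RuntimeError("channel is closed")
        if amount <= 0 or self.paid + amount > self.deposit:
            raise ValueError("invalid amount or deposit exhausted")
        self.paid += amount
        nonce = len(self.log) + 1
        self.log.append((nonce, self.paid))
        return nonce

    def settle(self) -> dict[str, float]:
        """Close the channel: payee receives the total, payer the remainder."""
        self.open = False
        return {self.payee: self.paid, self.payer: self.deposit - self.paid}


if __name__ == "__main__":
    channel = PaymentChannel("agent_a", "agent_b", deposit=10.0)
    for _ in range(10):  # e.g. ten metered chunks of streamed content
        channel.stream(0.25)
    print(channel.log[-1], channel.settle())
```

The design choice is that only two events (opening and settling) need to touch the chain; every increment in between is cheap bookkeeping, which is what makes per-second or per-request pricing practical.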

Once mainnet stabilized in early 2026, activity picked up quickly. Agent-to-agent transaction volume increased as developers started building payment-heavy use cases. Real-time content streaming, automated marketplaces, and machine-to-machine commerce became viable.

Proof of Artificial Intelligence ties rewards to useful activity. The network does not reward idle participation. It rewards agents that actually do work. Kite is not trying to compete on intelligence. It handles the layer that lets agents behave like real economic participants.

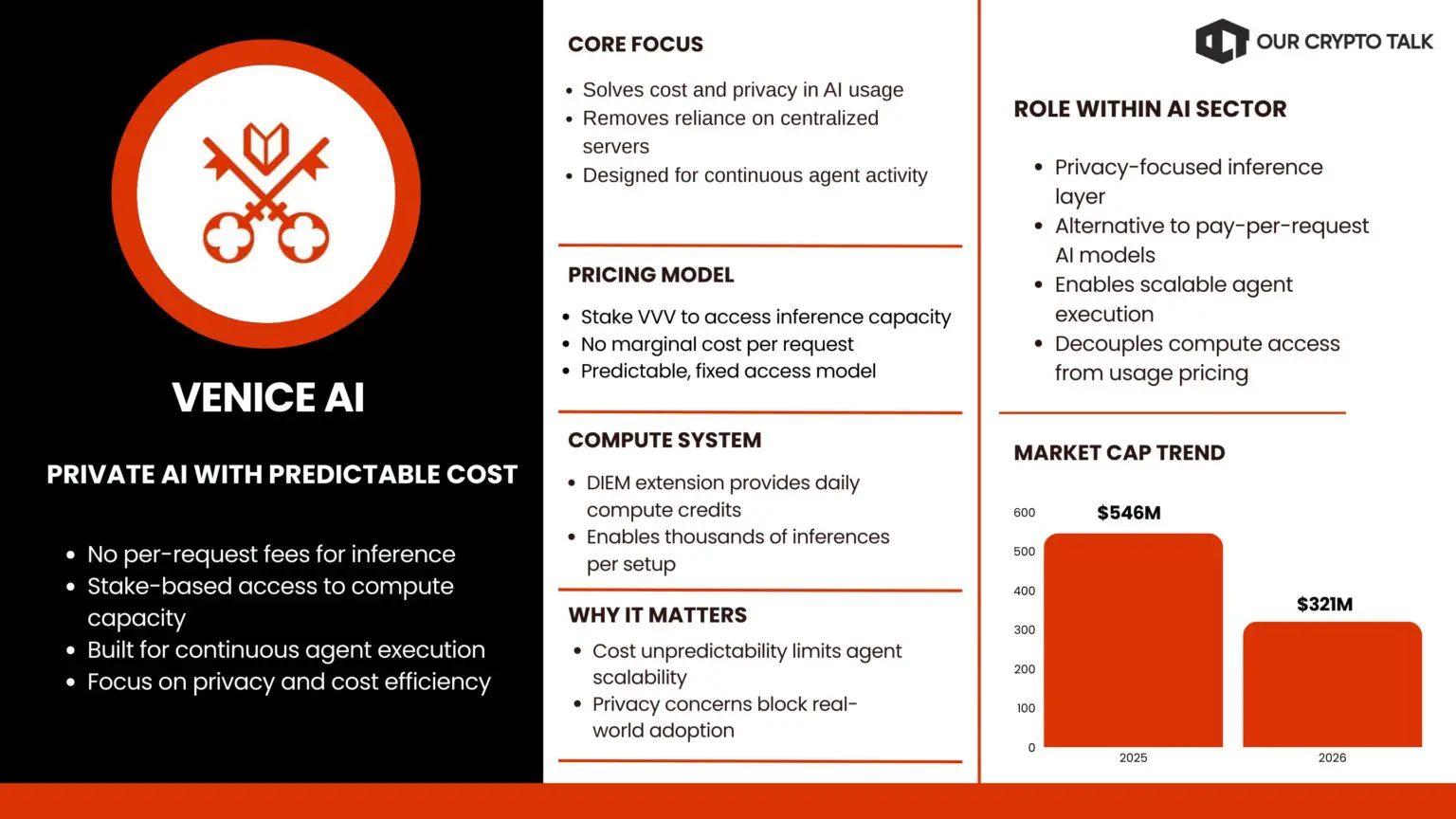

$VVV is part of the inference layer, with a market cap of $258M. Venice AI focused on two problems that matter in practice. Cost and privacy. Most AI systems require sending data to centralized servers and paying per request. That model breaks for agents that need to run continuously.

Venice replaced it with a staking model. Stake VVV and you get a share of the network’s inference capacity. No per-request fees. Just predictable access. The DIEM extension turns that capacity into daily compute credits. Once an agent is set up, it can run thousands of inferences without worrying about marginal cost.
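The staking model described above replaces per-request pricing with a proportional share of capacity. Here is a minimal sketch, assuming capacity is divided strictly in proportion to stake. The function names, the daily capacity figure, the per-request cost, and the stake amounts are all hypothetical, and Venice's actual VVV and DIEM accounting may work differently.

```python
def daily_credits(my_stake: float, total_staked: float, daily_capacity: float) -> float:
    """Pro-rata share of the network's daily inference capacity.
    Assumes capacity is split strictly in proportion to stake."""
    if total_staked <= 0 or my_stake < 0 or my_stake > total_staked:
        raise ValueError("invalid stake amounts")
    return daily_capacity * my_stake / total_staked


def runs_within_budget(requests_per_day: int, credits: float, cost_per_request: float) -> bool:
    """Check whether an always-on agent fits inside its daily credits."""
    return requests_per_day * cost_per_request <= credits


if __name__ == "__main__":
    # Hypothetical numbers: 10,000 of 1,000,000 staked tokens,
    # network capacity of 50,000 credits per day.
    credits = daily_credits(10_000, 1_000_000, 50_000)
    print(credits)  # 500.0
    print(runs_within_budget(requests_per_day=2_000, credits=credits, cost_per_request=0.25))
```

Under this assumption, an agent's marginal cost per request is zero once the stake is in place; the only constraint is whether its workload fits inside the daily allocation.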

Adoption followed. Over 2 million users have registered, with around 50,000 daily active users (self-reported by Venice). Developers integrated the API into production workflows because it removes both cost uncertainty and data exposure.

Agents benefit the most. They can operate continuously without leaking data or hitting usage limits. Venice does not try to replicate centralized models. It changes the pricing and privacy structure entirely.

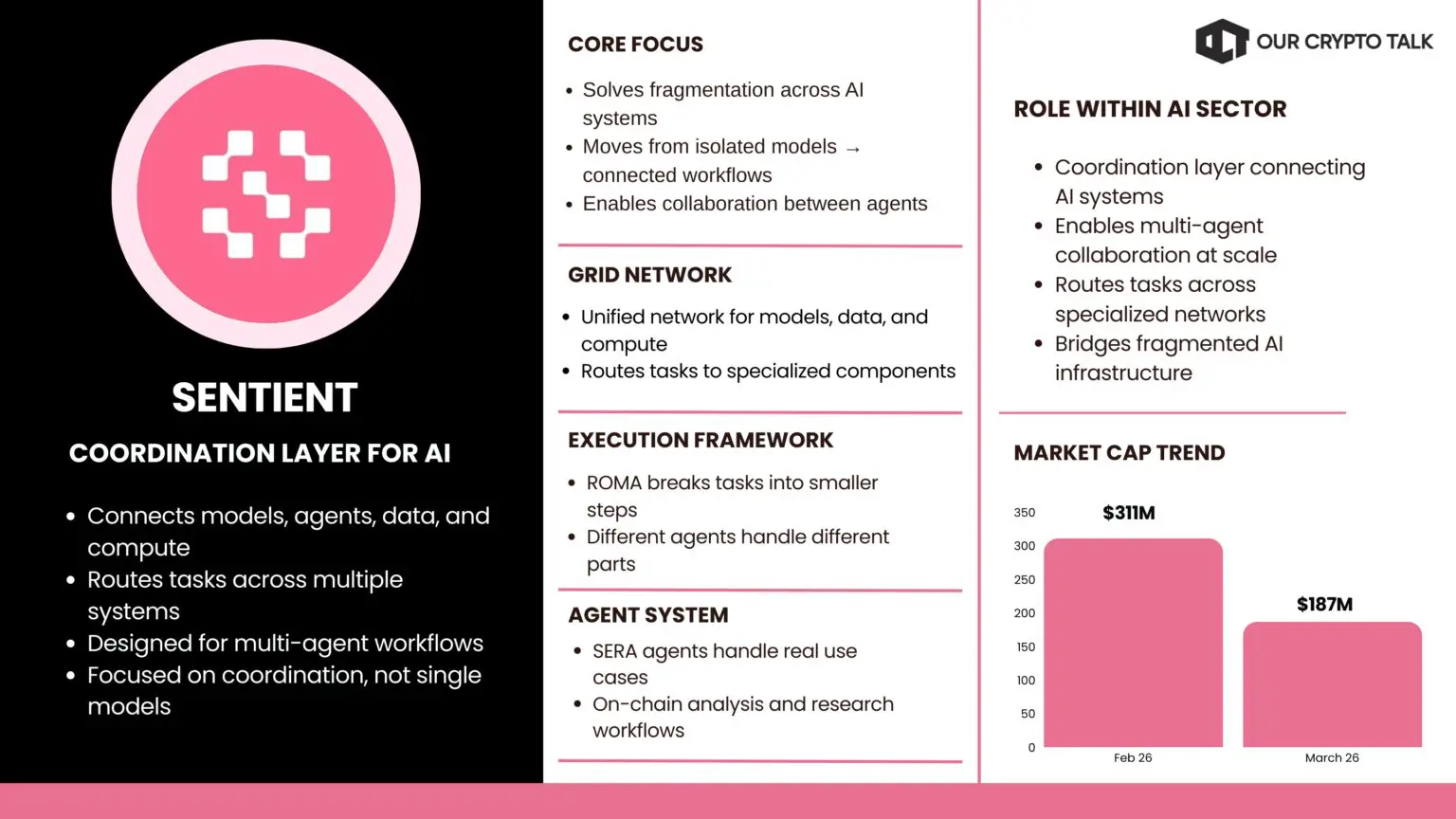

$SENT fits into the agent coordination category and carries a $158M valuation. Sentient focused on coordination. Most systems today operate in isolation. One model handles one task. That limits what agents can actually do. Sentient’s GRID connects models, data, agents, and compute into a single network. Instead of relying on one system, it routes tasks across multiple specialized components.

The ROMA framework breaks complex problems into smaller steps handled by different agents. The results remain verifiable and community-owned. SERA agents already handle use cases like on-chain analysis and research, with the aim of reducing common issues like hallucination.
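Breaking a complex problem into smaller steps handled by different agents is a recursive decompose-and-aggregate pattern. The sketch below illustrates that general pattern only. It is not the ROMA framework's API, and the `Task` structure, the `solve_atomic` stub, and the agent names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    goal: str
    subtasks: list["Task"] = field(default_factory=list)
    agent: str = "generalist"  # hypothetical specialist that handles leaf tasks


def solve_atomic(task: Task) -> str:
    # Stub standing in for a call to a specialized model or agent.
    return f"[{task.agent}] {task.goal}"


def solve(task: Task, depth: int = 0, max_depth: int = 5) -> list[str]:
    """Recursively solve subtasks and collect their results in order.
    Leaf tasks (no subtasks) are routed to their assigned agent."""
    if depth > max_depth:
        raise RecursionError("task tree too deep")
    if not task.subtasks:
        return [solve_atomic(task)]
    results: list[str] = []
    for sub in task.subtasks:
        results.extend(solve(sub, depth + 1, max_depth))
    return results


if __name__ == "__main__":
    report = Task("assess token X", subtasks=[
        Task("fetch on-chain flows", agent="chain_analyst"),
        Task("summarize recent news", agent="researcher"),
        Task("draft summary", agent="writer"),
    ])
    for line in solve(report):
        print(line)
```

Here aggregation is plain concatenation; a production system would add the verification and synthesis steps at that point, which is where per-step results can be checked before they are combined.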

In early 2026, activity on the GRID increased as more developers started building on top. The open architecture allows contributors to add models or data and earn rewards without building an entire system.

Sentient is not focused on a single use case. It is building the coordination layer that lets multiple systems work together. Read our full Sentient review.

These four projects fill the gaps the first wave exposed. Virtuals made agents investable. Kite gave them the ability to transact. Venice made inference predictable and private. Sentient made coordination possible. Together, they push the space from isolated tools toward systems that can operate on their own.

AI costs are rising faster than most teams can handle. GPUs are scarce, cloud bills keep climbing, and access gets throttled when demand spikes. That pressure forced a shift.

Decentralized compute flips the model. Instead of relying on a few providers, it pulls hardware from a global pool of independent operators. Anyone with spare GPUs or servers can supply capacity. Users tap into that pool on demand, pay in tokens, and get results back without dealing with lock-in or access restrictions. Compute-focused projects like Render and Akash continue to hold strong positions within the Top 10 AI Crypto Tokens due to consistent demand.

The shift is simple. Instead of a few companies controlling access, compute becomes an open market. That is why decentralized compute is now part of the core AI stack, not an alternative.

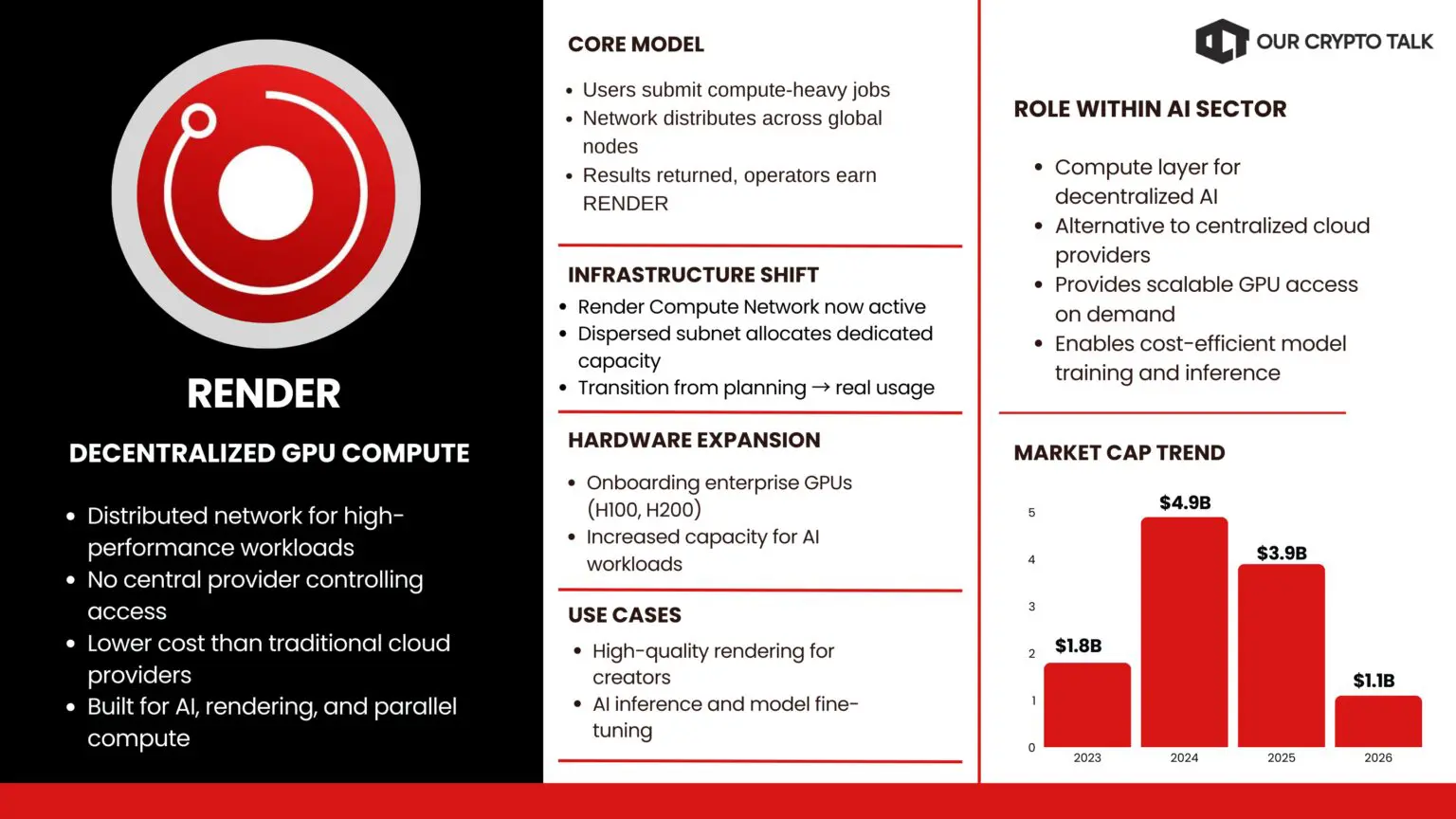

$RENDER, a leading compute network, holds a $966M market cap. Render spent years building decentralized GPU infrastructure. In early 2026, it finally reached the point where serious AI workloads could run on it. The model is simple. Users submit jobs that require heavy parallel compute. The network distributes those jobs across thousands of nodes. Results come back, and node operators earn RENDER tokens. No central provider sits in the middle.

The advantage shows up in cost and control. Traditional clouds charge premium rates for the same hardware, while Render can deliver similar output at a significantly lower price. Users keep ownership of their data and avoid restrictions on what they can run.

That matters for both creators and AI teams. Artists render high-quality scenes faster. Developers run inference and fine-tune models without waiting for access to scarce GPUs. Sensitive data stays within a distributed network instead of being exposed to centralized providers.

The real shift came from infrastructure upgrades. The Render Compute Network and the Dispersed subnet moved from planning to active use. These changes allocated dedicated capacity for AI and general compute workloads. By March 2026, the network started onboarding enterprise-grade GPUs like NVIDIA H100s and H200s.

The move to Solana removed another bottleneck. Faster settlement and lower fees made large-scale jobs practical. Projects began running live pipelines instead of isolated tests. Usage backed it up.

Monthly throughput increased, and active node operators kept growing as payouts stabilized. Developers who once relied on cloud providers started routing real workloads through Render because the economics made sense. Render no longer sits in a niche. It competes directly with centralized providers by offering usable capacity at better pricing without giving up control. Read our full Render review.

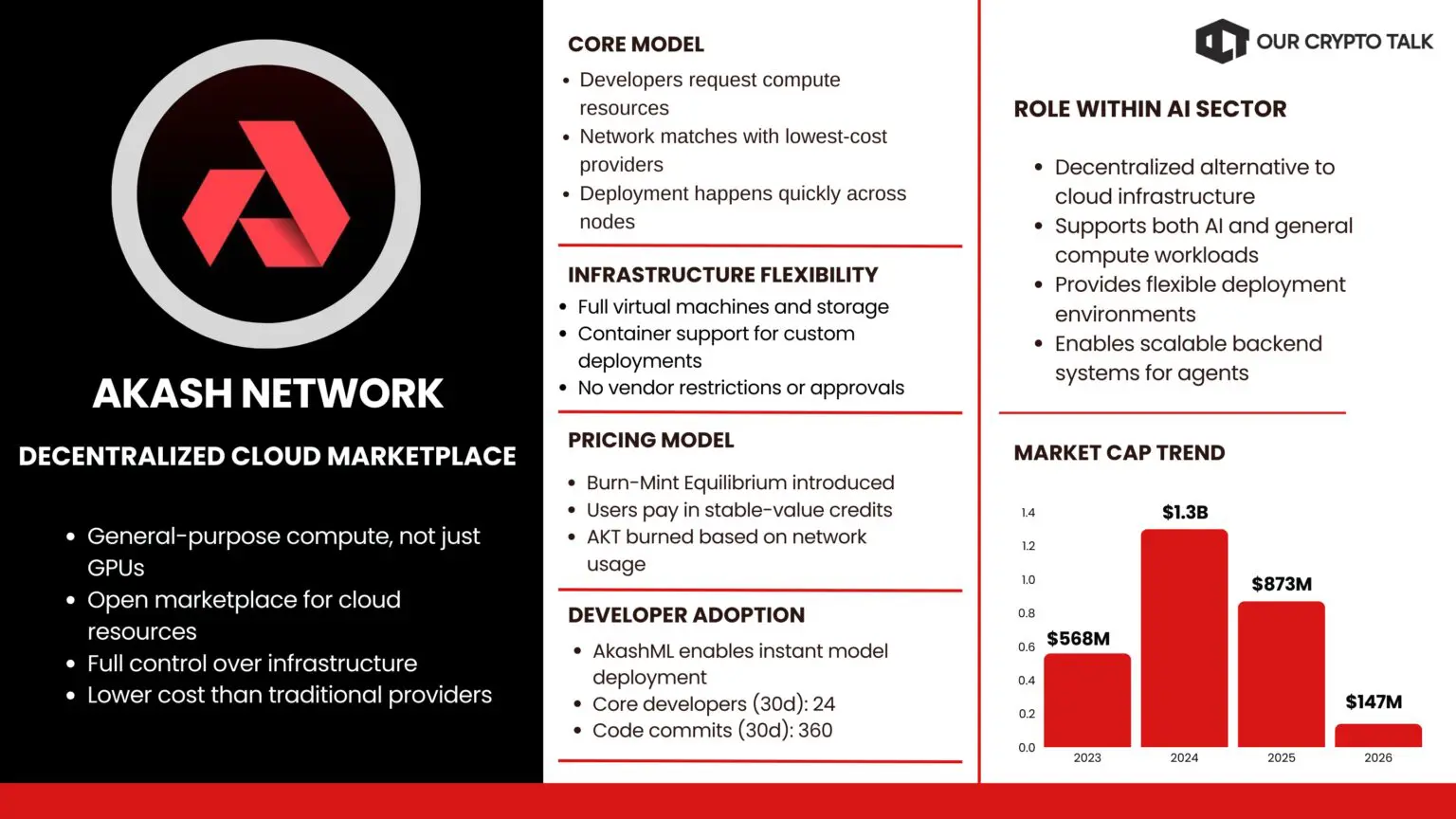

$AKT operates in decentralized compute, with a market cap of $132M. Akash approached the same problem from a broader angle. It built a decentralized cloud that handles general-purpose compute, not just GPUs. The network works as a marketplace. Developers define what they need. The system matches them with providers offering the best price. Deployment happens quickly, and providers earn AKT for running workloads.
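The matching step works like a reverse auction: the developer states requirements and providers compete on price. Below is a minimal Python sketch of that selection logic. The `Requirements` and `Bid` fields, provider names, and prices are hypothetical; on Akash, deployments are described in an SDL file and bids turn into on-chain leases, with far more detail than shown here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Requirements:
    cpu_cores: int
    memory_gb: int
    gpus: int


@dataclass(frozen=True)
class Bid:
    provider: str
    cpu_cores: int
    memory_gb: int
    gpus: int
    price_per_hour: float  # hypothetical, in USD


def meets(bid: Bid, req: Requirements) -> bool:
    """A bid qualifies only if it covers every requested resource."""
    return (bid.cpu_cores >= req.cpu_cores
            and bid.memory_gb >= req.memory_gb
            and bid.gpus >= req.gpus)


def pick_winner(bids: list[Bid], req: Requirements) -> Optional[Bid]:
    """Reverse auction: the cheapest bid that satisfies all requirements wins."""
    qualifying = [b for b in bids if meets(b, req)]
    return min(qualifying, key=lambda b: b.price_per_hour, default=None)


if __name__ == "__main__":
    req = Requirements(cpu_cores=8, memory_gb=32, gpus=1)
    bids = [
        Bid("provider_a", 16, 64, 1, 1.40),
        Bid("provider_b", 8, 32, 1, 1.10),
        Bid("provider_c", 8, 16, 1, 0.60),  # cheapest, but too little memory
    ]
    winner = pick_winner(bids, req)
    print(winner.provider if winner else "no qualifying bid")
```

Filtering before sorting matters: the cheapest offer only wins if it actually meets the spec, which is what keeps price competition from degrading the hardware a developer receives.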

The difference is flexibility. Users get full virtual machines, storage, and container support without vendor restrictions. They can run large models or deploy custom infrastructure without dealing with centralized approval systems.

Cost is a major factor. Akash consistently undercuts traditional providers by a wide margin. That matters as AI workloads scale and budgets tighten.

The key upgrades in early 2026 pushed adoption forward. The Burn-Mint Equilibrium model changed how pricing works. Users pay in stable-value credits, while the network burns AKT tied to usage. That connects demand directly to token economics and removes volatility for users.
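The link between usage and token supply under a burn-mint design comes down to simple arithmetic: the stable-value amount a user spends is converted into AKT at the current price, and that much AKT is burned. This sketch assumes the full USD amount is burned at a single spot price, which simplifies whatever conversion, fee split, and oracle mechanics Akash actually uses. The usage and price figures are hypothetical.

```python
def akt_burned(usage_usd: float, akt_price_usd: float) -> float:
    """AKT removed from supply when usage_usd of compute is paid for,
    assuming the full USD amount is burned at the current AKT price."""
    if akt_price_usd <= 0:
        raise ValueError("price must be positive")
    return usage_usd / akt_price_usd


if __name__ == "__main__":
    monthly_usage_usd = 100_000  # hypothetical compute spend
    for price in (0.5, 1.0, 2.0):  # hypothetical AKT prices
        print(price, akt_burned(monthly_usage_usd, price))
```

The user's bill stays fixed in dollar terms, while the number of tokens burned moves inversely with the AKT price. Under this assumption, more usage at any price means more AKT removed from supply.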

Mainnet improvements also reduced friction. Deployment became smoother, and visibility improved for developers managing workloads.

On the supply side, Starcluster introduced high-performance GPUs into the network. Enterprise-grade hardware started coming online, which allowed Akash to support more demanding AI workloads. AkashML simplified access further. Developers can choose pre-configured models and launch inference endpoints instantly. That removes a lot of operational overhead.

Real usage followed these changes. On-chain leases increased. GPU utilization improved. Projects started integrating Akash as their default backend for AI systems and agents. Akash is no longer competing on price alone. It offers a combination of cost efficiency, flexibility, and hardware access that centralized providers struggle to match.

Render and Akash cover different parts of the same problem. Render focuses on specialized GPU workloads and creative pipelines. Akash handles general-purpose compute and large-scale deployments.

Most AI systems still rely on weak data. They train on scraped content or closed datasets that no one can verify. That leads to errors, hallucinations, and limited trust. The model can sound convincing, but it cannot prove where the information came from.

Decentralized data protocols fix that. They structure and verify real-world information with clear ownership and audit trails. Every data point has provenance. You know its source, its history, and whether it has been altered. This changes how agents operate. Without reliable data, they guess. With verified data, they can act with confidence.
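Provenance claims like "you know its source, its history, and whether it has been altered" usually rest on content hashing. Below is a generic hash-chain sketch using only Python's standard library. Each version of a record commits to its source and to the hash of the previous version, so editing any earlier entry breaks verification. It illustrates the technique only and is not the data model or anchoring scheme of OriginTrail or any other specific protocol; the record fields and source names are hypothetical.

```python
import hashlib
import json


def entry_hash(data: dict, source: str, prev_hash: str) -> str:
    """SHA-256 over a canonical JSON encoding of the entry and its parent hash."""
    payload = json.dumps({"data": data, "source": source, "prev": prev_hash},
                         sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(chain: list[dict], data: dict, source: str) -> None:
    """Add a new version that commits to the previous version's hash."""
    prev = chain[-1]["hash"] if chain else "0" * 64
    chain.append({"data": data, "source": source, "prev": prev,
                  "hash": entry_hash(data, source, prev)})


def verify(chain: list[dict]) -> bool:
    """Recompute every hash and check each link to its predecessor."""
    prev = "0" * 64
    for entry in chain:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != entry_hash(entry["data"], entry["source"], prev):
            return False
        prev = entry["hash"]
    return True


if __name__ == "__main__":
    history: list[dict] = []
    append(history, {"shipment": "A17", "status": "departed"}, source="carrier_x")
    append(history, {"shipment": "A17", "status": "arrived"}, source="port_y")
    print(verify(history))                 # True
    history[0]["data"]["status"] = "lost"  # tamper with an old record
    print(verify(history))                 # False
```

A chain like this only proves integrity if its latest hash is published somewhere tamper-resistant (for example, on-chain); otherwise an attacker could rewrite the data and recompute every hash.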

AI without trusted data breaks under pressure. This layer fixes that by making information usable, not just available. Data-layer protocols are still underrepresented in the Top 10 AI Crypto Tokens, but their importance is increasing as agents rely more on verified information.

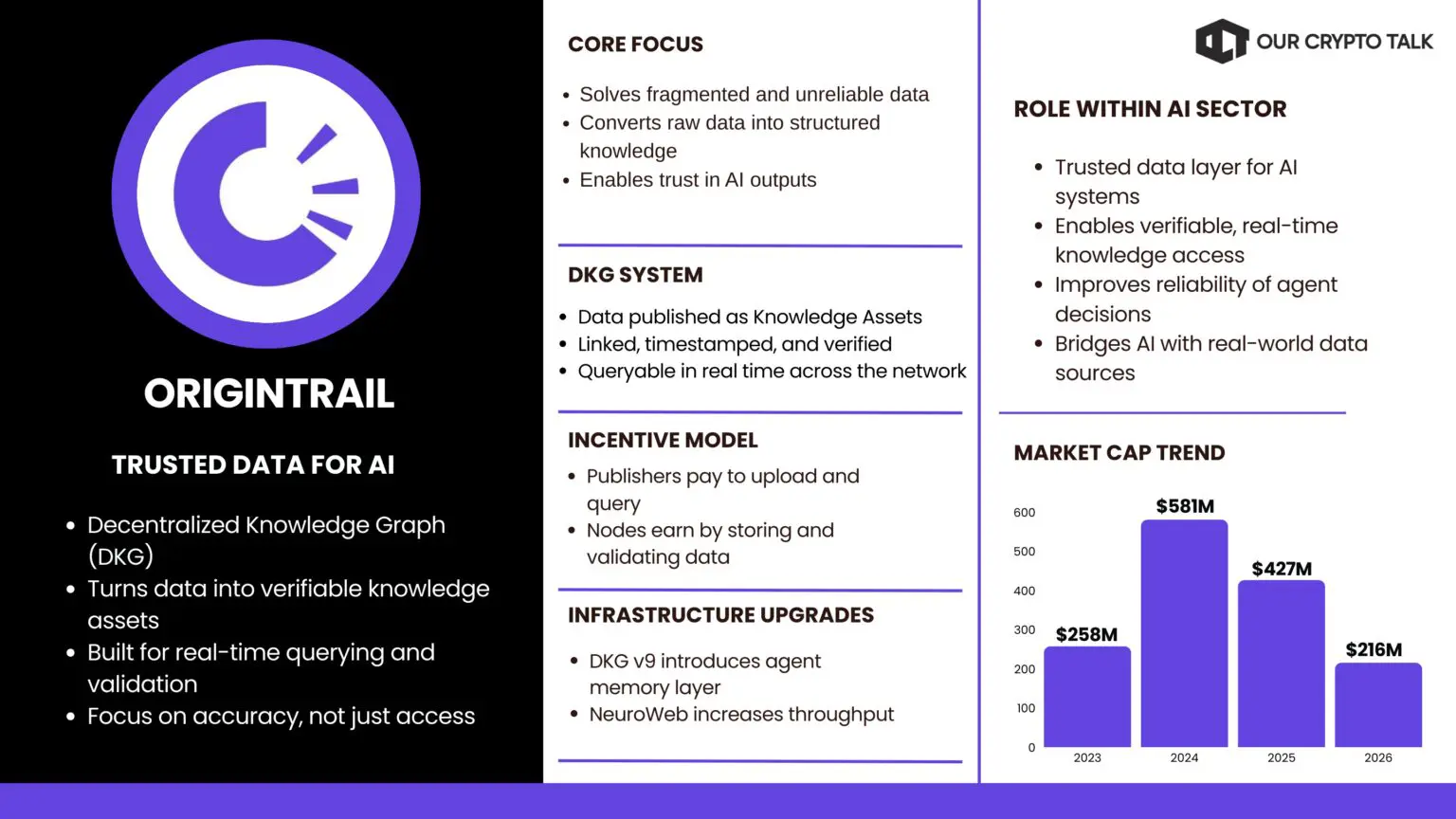

$TRAC sits in the AI data layer, valued at $171M. OriginTrail built the layer most AI projects ignored. Trusted data. Its Decentralized Knowledge Graph turns fragmented information into structured, connected assets that can be queried in real time. Enterprises, institutions, and developers upload data as Knowledge Assets. Each one is linked, timestamped, and verified across a distributed network.

Agents interact with this system like a search engine. The difference is that every result comes with proof. The data is not just retrieved. It is verifiable. The TRAC token keeps the system running. Publishers pay to upload and query. Nodes earn by storing and validating data. The network aligns incentives around long-term accuracy, not short-term usage.

The impact becomes clear when agents start using it. A supply chain agent can verify shipment data before acting. A research agent can reference sources that are actually provable. A compliance system can check claims against real filings instead of relying on assumptions. The dRAG framework pushes this further. Models can pull fresh, verified context at query time. That changes how decisions are made.

The 2026 upgrades moved this from concept to usage. DKG v9 introduced a dedicated memory layer for agents. They can now publish, verify, and query shared knowledge across the network. Performance also improved. The NeuroWeb expansion increased throughput, making real-time queries practical. Developers started integrating the graph directly into agent workflows because latency dropped and reliability improved.

OriginTrail filled a gap that was holding the entire agent ecosystem back. Compute and inference were improving, but data remained the weak point. By making data verifiable and usable, it turned agents from systems that guess into systems that can reason with context. Read our full OriginTrail review.

As of March 17, 2026, the AI crypto sector has crossed $17 billion. A few months ago, it was stuck around $11 to $13 billion. That jump is not noise. It signals a shift in how this market is pricing value.

The capital did not rotate in because of another hype cycle. It moved because projects started delivering usable products. For most of 2024 and 2025, a lot of AI tokens traded on future potential. Now, users can actually interact with what these networks are building.

The biggest change came from the rise of agent-based systems.

Agent tokens are not just another narrative layer. They power autonomous systems that can execute tasks on-chain without constant human input. That includes trading, pulling data, and interacting with smart contracts. This is where AI in crypto starts to feel practical. Instead of tools, these networks offer systems that can act.

This shift is clearly visible in the current Top 10 AI Crypto Tokens, where agent-driven projects started outperforming pure infrastructure plays. You can see it in the rankings. Tokens tied to live agent systems are moving up, while projects focused only on compute or data are holding position or losing relative share.

That divergence matters. It shows what the market values right now. Utility that runs in the background is winning over utility that still needs manual execution. In simple terms, automation is getting priced higher than infrastructure alone.

Zoom out, and the structure of the sector looks different. Early 2026 did not just add billions in market cap. It created separation. Capital concentrated around projects with visible traction. The space now looks less like a collection of experiments and more like an early-stage tech stack. That is the real shift.

The AI crypto market did not grow because of a new narrative. It grew because the stack started working.

Each layer now has clear leaders. Compute is becoming cheaper and more accessible through decentralized networks. Inference is moving away from closed systems into open, competitive markets. Agents are no longer experiments. They execute tasks, move capital, and generate revenue. Data is finally getting structured and verified instead of scraped and guessed. That is what defines the current Top 10 AI Crypto Tokens. Not ideas, but execution.

That combination changes how this sector should be evaluated. It is no longer about which project has the best idea. It is about which one delivers usable infrastructure. Early 2026 made that distinction obvious. Projects that solved real bottlenecks gained traction. Compute networks onboarded serious hardware. Agent platforms showed real usage. Data protocols proved that verifiable information can exist at scale. The gap between working systems and empty narratives widened fast.

The $17 billion market cap reflects that shift. Capital is no longer spread evenly across hype. It is concentrating around networks that people actually use. What comes next depends on execution. The foundations are in place. Now the question is which projects can scale usage, maintain performance, and keep incentives aligned as demand grows.

The direction is already clear. AI in crypto is moving from experimentation to infrastructure. The projects that stay in the Top 10 AI Crypto Tokens will be the ones that keep delivering performance as demand grows.

Which AI token do you think is most undervalued, or which is your favorite from this Top 10 AI Crypto Tokens list? Share on X @ourcryptotalk

All opinions in this article are those of the author and in no way constitute financial advice. Our Crypto Talk and the author always suggest you do your own research in crypto and never treat anything you read on the internet as financial advice. Check our Terms and Conditions for more info.